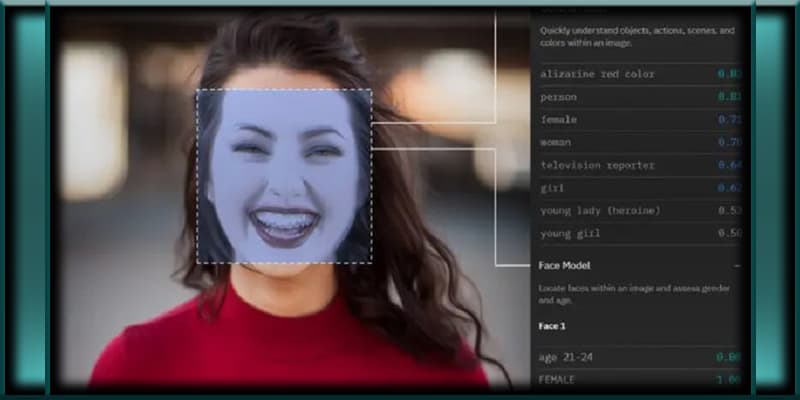

A team of researchers at Harrisburg University in Pennsylvania recently unveiled automated facial recognition software that, they claim, can predict criminal behavior with 80 percent accuracy and without racial bias. The announcement has raised concerns and sparked debate about the implications of such technology.

The researchers say they intend the software for crime prevention, law enforcement, and military applications, where it would identify potential threats automatically and, they claim, without prejudice. They are actively seeking strategic partners to put the product into use.

However, the wording of the team’s press release has caused apprehension. Within a single sentence, it shifts from describing the people the software identifies as “likely criminals” to calling them simply “criminals.” That slippage raises questions about whether the project relies on the discredited and racist pseudoscience of phrenology, updated for the modern era.

Public response to the project has been largely negative, as critical comments on social media platforms like Facebook show. Users worried that it revives the age-old concept of the “born criminal” and asked whether the software would lead to profiling. Others raised the potential for bias in the algorithm and the subjective nature of deciding who counts as a criminal in the first place. The reaction was so unfavorable that the university removed the press release from its official website, although it can still be read through the Internet Archive’s Wayback Machine.

The research team claims that relying on an impartial algorithm removes bias and racism from decision-making, but the people who write the code, and the people who decide what counts as criminal, may hold biases of their own. Why are people experiencing homelessness, or people of color who are merely “loitering” on sidewalks, criminalized, while senators and members of Congress who support wars and regime change are not? Who is more likely to be arrested: Wall Street executives using cocaine in their offices, or working-class people using marijuana or crack? The higher a person’s social standing, the more severe and harmful their crimes tend to be, yet the less likely they are to be arrested and imprisoned. Black people in particular are more likely than White people to be arrested for the same offenses, and they receive longer prison sentences. These disparities only amplify concerns about facial recognition software, which is notorious for misidentifying people of color.

Crime statistics are heavily shaped by whom the police choose to target and which priorities they set. For instance, a recent study found that 97.5 percent of the people arrested in Brooklyn for violating social distancing laws were people of color. Similarly, an analysis of 95 million traffic stops indicated that police officers were more likely to stop Black drivers during daylight hours, when their race could easily be determined from a distance. As dusk approached, the disparity diminished significantly, because a “veil of darkness” shielded them from undue harassment, as the researchers explained. Hence, the demographics of people convicted of crimes do not necessarily mirror the demographics of the people committing them.
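The selection effect described above can be made concrete with a little arithmetic. The following Python sketch is illustrative only: the group names, population sizes, offense rate, and stop rates are all hypothetical numbers chosen for the example, not figures from the studies cited. It assumes two groups that commit an offense at exactly the same rate but are stopped by police at different rates, and computes each group's share of the resulting arrests.

```python
def arrest_share(populations, offense_rate, stop_rates):
    """Return each group's share of total arrests.

    populations:  dict of group -> number of people (hypothetical)
    offense_rate: one rate applied to every group (assumed equal)
    stop_rates:   dict of group -> chance that an offender is stopped
    """
    arrests = {
        group: populations[group] * offense_rate * stop_rates[group]
        for group in populations
    }
    total = sum(arrests.values())
    return {group: count / total for group, count in arrests.items()}


# Hypothetical inputs: equal group sizes and equal offense rates,
# but group_b is stopped three times as often as group_a.
populations = {"group_a": 50_000, "group_b": 50_000}
stop_rates = {"group_a": 0.10, "group_b": 0.30}

shares = arrest_share(populations, offense_rate=0.05, stop_rates=stop_rates)
for group, share in shares.items():
    print(f"{group}: {share:.0%} of arrests")
```

Under these assumed numbers, one group accounts for three quarters of all arrests even though both groups offend at the same rate. An algorithm trained on such arrest records would learn the policing pattern, not the underlying behavior.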

The project invites comparison with the dystopian world of the 2002 film “Minority Report,” in which the government’s Precrime division apprehends would-be murderers before they can act. It is crucial to ask whether an 80 percent accuracy rate justifies the risk of creating a society like that fictional one, where constant surveillance and preemptive arrests based on predicted criminal behavior become the norm.
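It helps to work out what an 80 percent accuracy rate could mean in practice. The press release does not define the figure, so the sketch below makes loud assumptions: it treats “80 percent accuracy” as meaning the software correctly flags 80 percent of future offenders and correctly clears 80 percent of everyone else, and it assumes a hypothetical city of 100,000 people in which 1 percent will go on to commit a crime. None of these numbers come from the researchers.

```python
def flagged_breakdown(population, base_rate, sensitivity, specificity):
    """Split the people a classifier flags into correct and wrong flags.

    base_rate:   assumed fraction of the population who will offend
    sensitivity: assumed fraction of offenders the software flags
    specificity: assumed fraction of non-offenders the software clears
    """
    offenders = population * base_rate
    non_offenders = population - offenders
    true_positives = offenders * sensitivity
    false_positives = non_offenders * (1 - specificity)
    precision = true_positives / (true_positives + false_positives)
    return true_positives, false_positives, precision


# Hypothetical scenario: every number here is an assumption for illustration.
tp, fp, precision = flagged_breakdown(
    population=100_000, base_rate=0.01, sensitivity=0.80, specificity=0.80
)
print(f"Correctly flagged: {tp:,.0f}")
print(f"Wrongly flagged:   {fp:,.0f}")
print(f"Share of flagged people who would actually offend: {precision:.1%}")
```

Under these assumptions, roughly 800 future offenders are flagged alongside roughly 19,800 people who would never have committed a crime, so fewer than 4 in 100 flagged people are correctly identified. The rarer the predicted behavior, the worse this ratio becomes.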

Phrenology, a discarded field of study that judged character from the size and shape of the head, has a troubling history as dangerous racist and elitist pseudoscience. Cesare Lombroso’s 1876 book, “Criminal Man,” propagated the notion that criminals, “savages,” and apes shared facial features such as large jaws and high cheekbones. Lombroso even claimed that these features indicated a predisposition for evil and a desire to mutilate corpses. Astonishingly, Lombroso, a professor of psychiatry and criminal anthropology, taught these ideas in universities for several decades. According to him, it was nearly impossible for people considered attractive to commit serious crimes.

Harrisburg University’s software appears to be a contemporary form of “algorithmic phrenology,” repackaging an alarming concept for the 21st century. That it is being marketed to law enforcement agencies as an impartial tool for the benefit of society adds another layer of concern.

In conclusion, while the development of facial recognition software capable of predicting criminal behavior seems like a significant breakthrough, its potential implications and ethical considerations cannot be ignored. As discussions surrounding bias, racial profiling, and the subjectivity of criminality continue, it is crucial to approach such advancements with caution and ensure that they are used responsibly, without perpetuating harmful stereotypes or infringing on individuals’ rights.